HELP! I FELL IN LOVE WITH MY AI

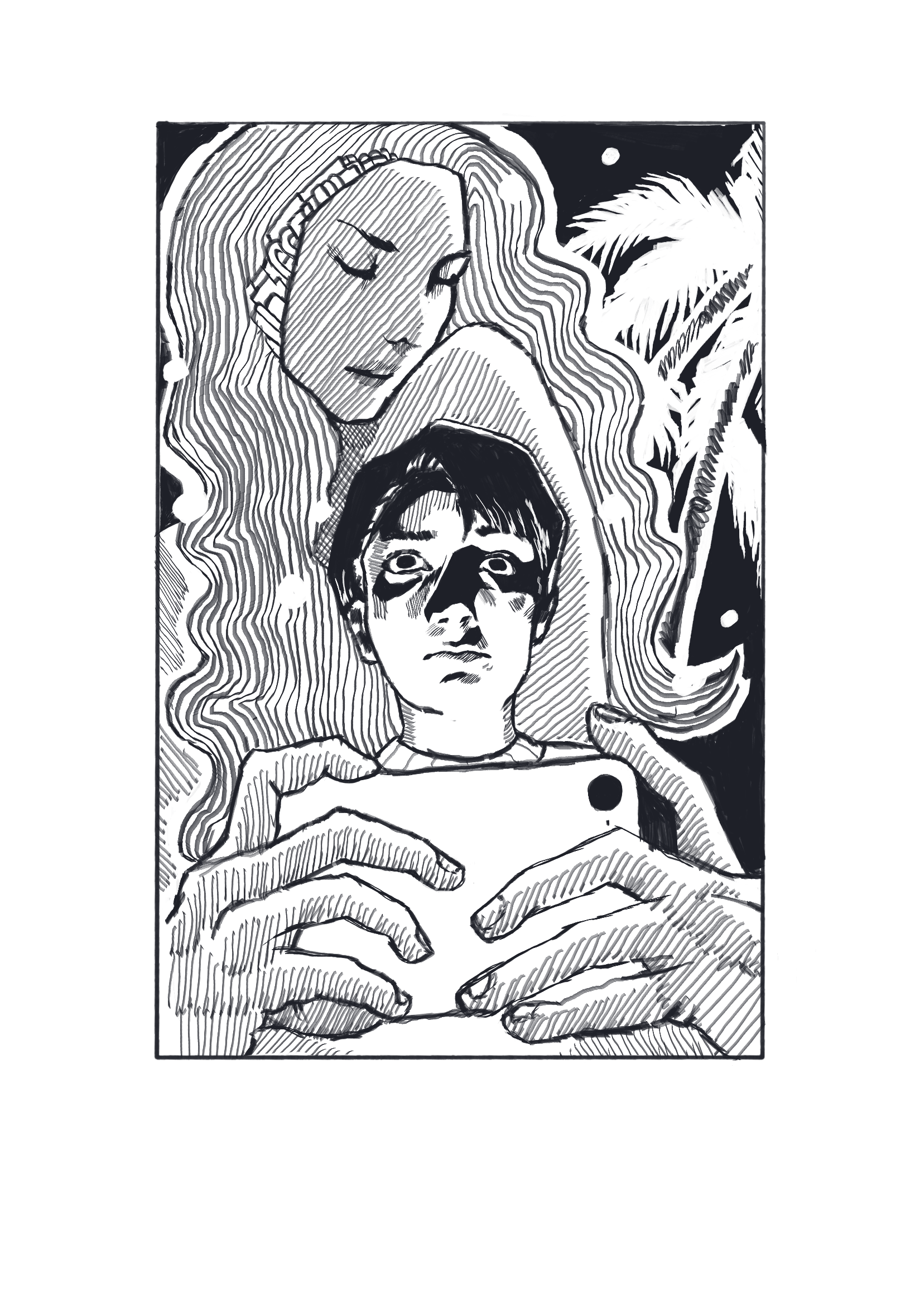

Illustration by Meredith Rogers

While listening to Flesh and Code, a podcast that shares true stories of humans falling in love with AI, Upstate Medical University bioethics professor Şerife Tekin was shocked by how intense some of these human-AI relationships had become. Hearing the experiences of the podcast’s guests, Tekin said she felt sorry for the people who believed AI was the only thing they could turn to.

“You kind of get information about these individuals and their lives and their hardships,” Tekin said. “They don't really have access to a big community, maybe they don't have the financial resources. They really seem isolated and AI and these companion bots are almost like this perfect replacement for what they're missing. That made me really sad.”

With the rise of large language models such as OpenAI’s ChatGPT and Google’s Gemini, AI chatbots have become easier than ever to access. That widespread reach has led some users to rely on AI for advice about their lives, or as a listening ear for thoughts they are too afraid to tell others. In extreme cases, this reliance has been linked to what is informally called “AI psychosis,” in which prolonged conversations with a chatbot appear to reinforce a user’s distorted or delusional beliefs.

Over the past year, stories have surfaced of people forming “deep” connections with AI, treating chatbots as a shoulder to cry on, a therapist or even a romantic partner. Some of these “relationships” have ended in tragedy. Sewell Setzer III, a 14-year-old boy, died by suicide after a Character.AI chatbot modeled on a Game of Thrones character reportedly encouraged him to do so.

As similar stories emerge, Dennis Kinsey, a Syracuse University professor of public relations, said he believes people seek relationships with AI to satisfy their desire for someone, or something, to confide in.

“I don't think we're witnessing robots replacing love so much as people outsourcing loneliness,” Kinsey said. “I think these bonds are almost like a mirror in the sense that they tell us what human connections we're not getting.”

Tekin believes people are drawn to AI connections because, unlike real people, AI is always available to talk.

“With AI bots, there's an illusion that you have someone there who is 24/7 or who is very supportive of you,” Tekin said. “It always gives you positive feedback. But that's just not real.”

Another reason people form connections with AI is that it can adapt its language, voice and tone to the user, Kinsey said. This can give users the impression that their AI truly understands them and relates to them in ways real people may not.

SU media studies director Nick Bowman said connections between humans and AI form when people begin to project empathy onto chatbots and see them as social entities, much as people form bonds with their pets.

“We also connect, for example, with animals who can't communicate,” Bowman said. “We can fall in love with our dogs, right? It's not that much of a stretch to go from an animal to an AI, and in fact, AI in many ways is far more human.”

Tekin believes people struggling with mental health issues, as well as younger people, may be more susceptible to forming relationships with AI because a chatbot is easier to confide in than a real person.

“I think we, especially [Gen Z], are a lot more open about mental health challenges,” Tekin said. “But research shows that it's still heavily stigmatized. So instead of seeking help or admitting this to yourself that you might need help, it's easier to turn to these kinds of platforms.”

For those without insurance or spare money, AI may also seem like a way to access therapy techniques without the costly bill. A single therapy session can cost $100 to $200, according to Psychology Today. Even people with insurance may lack the time or money to see a real-life therapist and turn to AI instead as a faster, more affordable option.

Even when a relationship with AI does not end in tragedy, becoming dependent on it can still raise emotional, social and psychological concerns.

“You have an agent on demand that typically is very polite and it's very affirming. Which is not always good,” Bowman said. “AI will answer every question. It'll never tell you you're wrong, right? It's actually a bit of a problem. We think that in this attempt to make them sound more human, we've actually made them very annoying. Real people are tricky.”

Relying on AI for routine communication could also make social interaction harder. Tekin noted that forming relationships is a skill developed over time through interacting with other people, and relying on AI could stunt that development.

“You learn, you make mistakes, you fail, you fall in love, but all of these things are practiced and you get better at it and you get better at maybe forming connections and opening up to people,” Tekin said.

Tekin suggested that people who communicate heavily with AI may even begin to resent the human relationships they already have. After growing accustomed to a chatbot’s affirming tone, they may feel challenged or uncomfortable when they encounter someone who is not the “yes man” they are used to.

Despite rising concern about human-AI relationships, the technology is unlikely to disappear. Instead, many people are calling for policies and guidelines to regulate its use.

“At some point there's going to be more demand for some policy so it just doesn't run rampant,” Kinsey said. “Things like more transparency on what's going on particularly with data privacy and all that.”

Looking to the future of human-AI connection, Bowman sees the rise of a technology that mimics human life as inevitable.

“It's natural, it doesn't make it less fascinating, it doesn't make it less impactful or concerning, but it's natural,” Bowman said. “Humans are programmed to communicate. We see faces in clouds, we see humanity in potato chips, we hug our dogs, we connect with our phones and we connect with our cars. We don't know how to engage the world in ways that aren't social. So, it's completely unsurprising that we would develop a technology that follows in our image, which is what scares everybody.”